Backend infrastructure at Spotify

Our backend infrastructure is very much a work in progress: in some areas we have come a long way, and in others we have just started.

In order to understand why we are building this infrastructure we need to cover some background on how Spotify’s development organization works. We are currently around 300 engineers at Spotify – and we are growing rapidly.

Background

Growth – At Spotify, things grow all the time: the number of daily users, the number of backend nodes that power the service, the number of hardware platforms our clients run on, the number of development teams that work with our products, the number of external apps we host on our platform, and the number of songs we have in our catalogue.

Speed – As we grow we have to navigate carefully around a lot of things that could bring our development speed down. We make great efforts to eliminate dependencies between teams, and remove unnecessary complexity from our architecture.

Autonomous squads – One key concept at Spotify is that each development team should be autonomous. A development team (or ‘squad’ in Spotify lingo – see http://blog.crisp.se/2012/11/14/henrikkniberg/scaling-agile-at-spotify) should always be able to move independently of other squads. Even if there is a dependency between two squads there is always a way for the dependent squad to move forward. To enable all squads to make progress even though they have dependencies on another squad we have a few strategic ideas that we try to apply everywhere: our transparent code model and our self service infrastructure.

Transparent code model – All the code in the Spotify client, the Spotify backend and the Spotify infrastructure is available to every developer at Spotify to read or change. If a squad is blocked waiting for another squad to change some code, they always have the option to go ahead and make the change themselves.

In practice, Spotify’s transparent code model works by all teams sharing the same centralized git server. Each git repo has a dedicated system owner who takes care of the code and makes sure it does not rot. The transparent code model ensures that everyone can make progress all the time, and that everybody has access to everybody’s code. This keeps Spotify moving forward and creates a positive and open work environment.

Self service infrastructure – All infrastructure that is needed should be available as a self service entity. That way, there is no need to wait for another team to get hardware, set up a storage cluster or make configuration changes. The Spotify backend infrastructure is built up of several layers of hardware and software, ranging from physical machines to messaging and storage solutions.

Open source – We try to use open source tools whenever possible. Since Spotify is constantly pushing the scalability limits of the software we use in our backend, we need to be able to improve that software in critical areas. We have contributed to many of the open source projects we use, for example Apache Cassandra and ZMQ. We use almost no proprietary software, simply because we cannot trust that we will be able to tailor it to our ever-growing needs.

Culture – At Spotify we believe strongly in empowered individuals. We reflect this in our organization with autonomous teams. For engineers there are many possibilities to move and try working in other areas inside Spotify to ensure that everybody stays passionate about their work. We have hackdays regularly where people can try out pretty much any idea they have.

Architecture

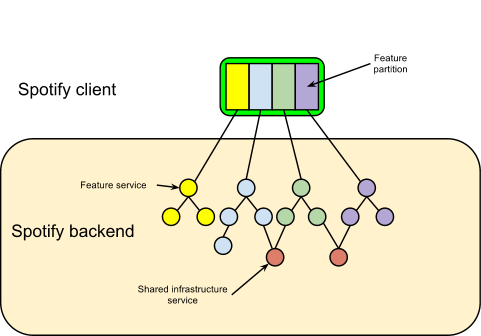

Any architecture that needs to handle the volume of users that Spotify has needs to partition the problem. The Spotify architecture partitions the problem in several different ways, the first being partitioning by feature. A slightly oversimplified description is that all the physical screen area of all the pages and views in our clients is owned by some squad. Every feature in the Spotify clients belongs to a specific squad. The squad is responsible for that feature across all platforms: all the way from how it appears on an iOS device or in a browser, via the real-time requests handled by the Spotify backend, to the batch-oriented data crunching that takes place in our Hadoop cluster to power features like recommendations, radio and search.

If one feature fails, the other features of our clients are independent and will continue to work. If there is a weak dependency between features, failure of one feature may sometimes lead to degradation of service of another feature, but not to the entire Spotify service failing.
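To make the idea of a weak dependency concrete, here is a minimal sketch in Python. It is an illustration only, not Spotify's actual code: the function names (`with_fallback`, `render_playlist_page`) and the choice of a playlist page depending on recommendations are assumptions made for the example.

```python
from typing import Callable, Dict, List


def with_fallback(fetch: Callable[[], List[str]], fallback: List[str]) -> List[str]:
    """Call a weakly-depended-on feature; degrade to a default if it fails."""
    try:
        return fetch()
    except Exception:
        # The other feature is down: serve a degraded result instead of failing.
        return fallback


def render_playlist_page(
    fetch_tracks: Callable[[], List[str]],
    fetch_recommendations: Callable[[], List[str]],
) -> Dict[str, List[str]]:
    """Hypothetical page that owns its tracks but only weakly needs recommendations."""
    return {
        # Strong dependency: the page cannot work without its own data.
        "tracks": fetch_tracks(),
        # Weak dependency: an empty list is an acceptable degraded state.
        "recommendations": with_fallback(fetch_recommendations, []),
    }
```

If the recommendations call raises, the page still renders its tracks and simply shows no recommendations.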

Since not all users are using all the features at the same time, the number of users that have to be handled by the backend of a particular feature is typically much smaller than the number of users of the entire Spotify service.

Since all the knowledge around one particular feature is concentrated in one squad, it is very easy to A/B test features, look at the data collected and make an informed decision with all the relevant people involved.

Feature partitioning gives scalability, reliability and an efficient way of focusing team efforts.

Backend Infrastructure

After partitioning our problem by feature, and giving a highly skilled cross functional squad the mission to take care of and work with that feature, the question now becomes: how do we build infrastructure that supports that squad efficiently?

How can we make sure that the team can develop their features at breakneck speed without risking being blocked by other teams? How can our infrastructure solve the hard problems around scaling globally? I’ve already talked about our transparent code model, which always allows a team to go forward, but there are other parts of the organization apart from the feature development squads.

In many organizations you have database administrators that take care of databases and their schemas, and you typically have to go through an operations department to get hardware allocated in data centers, etc. These special functions in the organization become bottlenecks when there are 100 squads simultaneously demanding their services. To solve this we are developing a backend infrastructure at Spotify that is fully self service. Fully self service means that any squad can start developing and iterating on a service in the live environment without having to interact with the rest of the organization.

To achieve this, we’ve needed to solve a range of problems across several different areas. I will cover a few important ones here.

Provisioning – When developing a new feature, a squad typically needs to deploy its service in several locations. We are building infrastructure to enable the squad to decide for itself whether the service should be deployed in Spotify’s own data centers or if the feature can use a public cloud offering. The Spotify infrastructure strives to minimize the difference between running in our own data centers and on a public cloud. In short, you get better latency and a more stable environment in our own data centers. On a public cloud you get much faster provisioning of hardware and much more dynamic scaling possibilities.

Spotify clients connecting to their closest datacenter.

Storage – Most features require some sort of storage, obvious examples being playlists and the “follow” feature. Building a storage solution for a feature that millions of people will use is not an easy task, and there are a lot of things that have to be considered: access patterns, failover between sites, capacity, consistency, backups, degradation in the case of a net split between sites, and so on. There is no easy way to fulfill all those requirements in a generic way. For each feature the squad will have to create a storage solution that fits the needs of that particular service. The Spotify infrastructure offers a few different options for storage: Cassandra, PostgreSQL and memcached.

If the feature’s data needs to be partitioned, then the squad has to implement the sharding themselves in their services. Many services, however, rely on Cassandra keeping full replicas of the data between sites. Setting up a full storage cluster with replication and failover between sites is complicated, so we are building infrastructure to set up and maintain multi site Cassandra or PostgreSQL clusters as one unit. For people building apps on the Spotify API there will be a storage as a service option that will not require setting up any clusters. The storage as a service option will be limited to a very simple key-value store.
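As a rough idea of what sharding implemented inside a service can look like, here is a minimal Python sketch of hash-based sharding. It is not how any particular Spotify service does it: the shard names and the choice of a SHA-256 hash with a modulo are assumptions made for illustration.

```python
import hashlib
from typing import List

# Hypothetical shard names, for illustration only.
SHARDS: List[str] = ["playlist-db-0", "playlist-db-1", "playlist-db-2", "playlist-db-3"]


def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard index using a hash that is stable across processes."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an unsigned integer.
    return int.from_bytes(digest[:8], "big") % num_shards


def node_for(user_id: str) -> str:
    """Pick the storage node that holds the data for a given user."""
    return SHARDS[shard_for(user_id, len(SHARDS))]
```

A cryptographic hash is used here instead of Python's built-in `hash()` because the built-in is randomized per process for strings. Note that plain modulo sharding moves most keys when the number of shards changes; consistent hashing is a common alternative when shards are added or removed often.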

Messaging – Spotify clients and backend services communicate using the following paradigms: request-reply, messaging and pubsub. We have built our own low latency, low overhead messaging layer and are planning to extend it with high delivery guarantees, failover routing and more sophisticated load-balancing.
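The three paradigms can be sketched with a toy in-process broker in Python. This only illustrates the difference between the patterns; it is not Spotify's messaging layer, and the class and method names (`ToyBroker`, `request`, `send`, `publish`) are made up for the example.

```python
from typing import Callable, Dict, List


class ToyBroker:
    """In-process stand-in for a messaging layer (illustration only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[str], str]] = {}
        self._subscribers: Dict[str, List[Callable[[str], None]]] = {}

    def register(self, service: str, handler: Callable[[str], str]) -> None:
        """Register the handler that serves a named service."""
        self._handlers[service] = handler

    def request(self, service: str, payload: str) -> str:
        """Request-reply: the caller gets the service's answer back."""
        return self._handlers[service](payload)

    def send(self, service: str, payload: str) -> None:
        """Messaging: one-way delivery to a service, any reply is discarded."""
        self._handlers[service](payload)

    def subscribe(self, topic: str, callback: Callable[[str], None]) -> None:
        """Pubsub: register interest in a topic."""
        self._subscribers.setdefault(topic, []).append(callback)

    def publish(self, topic: str, message: str) -> int:
        """Pubsub: deliver to every subscriber and return how many received it."""
        callbacks = self._subscribers.get(topic, [])
        for callback in callbacks:
            callback(message)
        return len(callbacks)
```

A real messaging layer adds networking, serialization, routing and delivery guarantees on top of these basic shapes.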

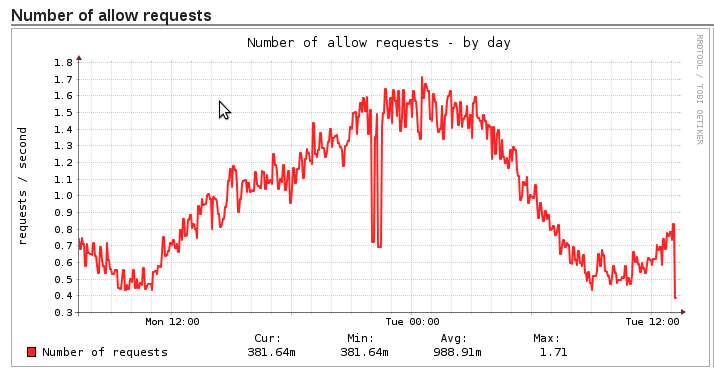

Capacity planning – The growth of Spotify drives a large amount of traffic to the backend. Each squad has to make sure that their features always scale to the current load. The squad can choose to keep track of this manually by monitoring traffic to their services, identifying and fixing bottlenecks, and scaling out as needed. We are also building an infrastructure that allows squads to scale their services automatically with load. Automatic scaling typically only works for bottlenecks that you are aware of, so there is always a certain level of human monitoring that the squad needs to handle. Our infrastructure allows the easy creation of graphs and alerts to support this.
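A scaling decision driven by load can be as simple as the following Python sketch. The formula, the parameter names and the default values (25% headroom, a minimum of two instances) are assumptions chosen for illustration, not a description of Spotify's autoscaling.

```python
import math


def desired_instances(
    current_rps: float,
    rps_per_instance: float,
    headroom: float = 0.25,
    min_instances: int = 2,
) -> int:
    """Return how many instances are needed for the current load.

    current_rps:      observed requests per second for the service
    rps_per_instance: load one instance can handle (a measured, known bottleneck)
    headroom:         extra capacity fraction kept in reserve for spikes
    min_instances:    floor that keeps some redundancy at low load
    """
    if rps_per_instance <= 0:
        raise ValueError("rps_per_instance must be positive")
    needed = current_rps * (1 + headroom) / rps_per_instance
    return max(min_instances, math.ceil(needed))
```

For example, `desired_instances(1000, 100)` asks for 13 instances. The sketch only reacts to request rate, so a bottleneck it does not measure (for instance a slow downstream database) would still need a human looking at graphs and alerts.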

Insulation from other services – As new features and services are developed they tend to call each other in non trivial ways. It is very important that all squads feel that they can run at full speed while minimizing the risk of negatively affecting other parts of Spotify. To limit that risk, our messaging layer has a rate limit and permissions system. Rate limits have a default threshold, which allows squads to call other services to try something out. If exceptionally heavy traffic is anticipated, the squads involved need to coordinate and agree on how to handle it together. Different features are always run on separate servers or virtual machines, so that one misbehaving service cannot take down another.
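One common way to implement a default rate limit with agreed exceptions is a token bucket per caller. The Python sketch below is an assumption about how such a limiter could look, not the actual mechanism in Spotify's messaging layer; the class names, the `overrides` parameter and the caller names in the usage note are all hypothetical.

```python
import time
from typing import Callable, Dict, Optional


class TokenBucket:
    """Allows `rate` calls per second on average, with bursts up to `burst`."""

    def __init__(self, rate: float, burst: float, clock: Callable[[], float]) -> None:
        self.rate = rate
        self.burst = burst
        self._clock = clock
        self._tokens = burst
        self._last = clock()

    def allow(self) -> bool:
        now = self._clock()
        # Refill tokens for the time elapsed, capped at the burst size.
        self._tokens = min(self.burst, self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False


class RateLimiter:
    """Per-caller limits: a default threshold plus explicitly agreed overrides."""

    def __init__(
        self,
        default_rate: float,
        overrides: Optional[Dict[str, float]] = None,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._default_rate = default_rate
        self._overrides = overrides or {}
        self._clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, caller: str) -> bool:
        bucket = self._buckets.get(caller)
        if bucket is None:
            # Callers without an agreed override get the default threshold.
            rate = self._overrides.get(caller, self._default_rate)
            bucket = TokenBucket(rate, burst=rate, clock=self._clock)
            self._buckets[caller] = bucket
        return bucket.allow()
```

With `RateLimiter(default_rate=2, overrides={"search": 5})`, an unknown caller can try the service out at up to two calls per second, while the hypothetical `search` caller has a higher limit that the two squads agreed on in advance.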

Wrap up

As I mentioned in the beginning of this post, a lot of this is work in progress, and there are a lot of very interesting challenges coming our way. The view I’ve presented here represents a snapshot of how we see things at Spotify right now, and since we are addicted to continuous improvement, tomorrow we may well have changed some things…

And of course, if you feel this is interesting, have a look at our open positions.
