April 2, 2024

Engineering

Read about the magic behind the music & more.

Welcome to our official technology blog.

April 2, 2024

Data Platform Explained

As engineers working at Spotify, we frequently find ourselves explaining our robust data platform to fellow professionals who are contemplating [...]

March 5, 2024

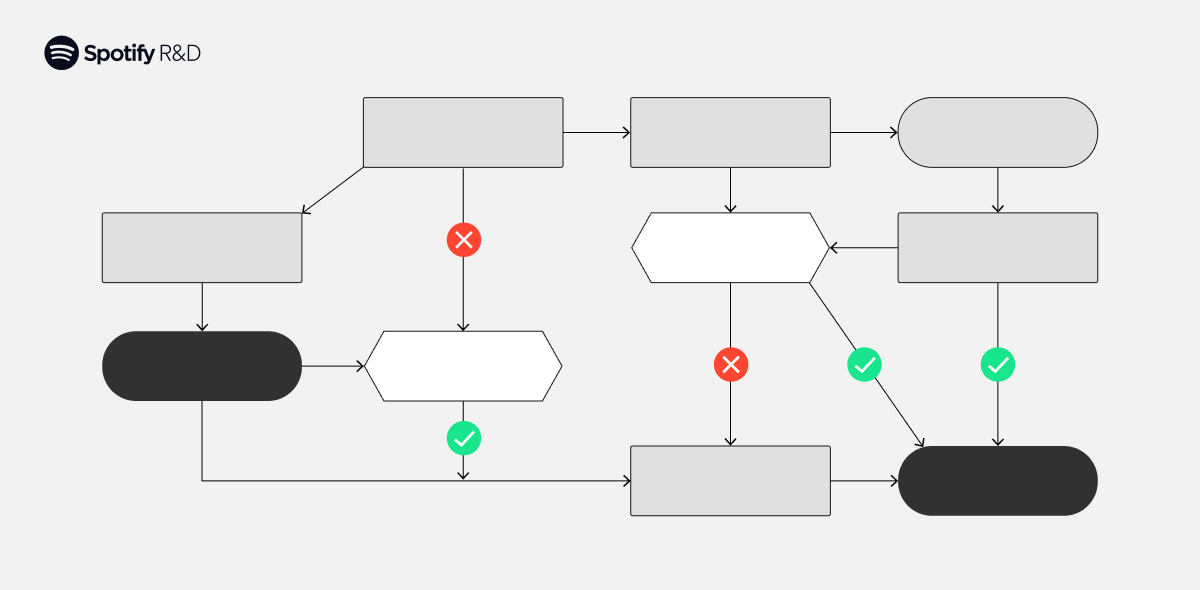

Risk-Aware Product Decisions in A/B Tests with Multiple Metrics

TL;DR We summarize the findings in our recent paper, Schultzberg, Ankargren, and Frånberg (2024),...

February 7, 2024

Applying the Facade Pattern on Spotify for Artists

At Spotify, we’re dedicated to delivering a unified experience to our customers — which can somet...

January 24, 2024

Exploring the Animation Landscape of 2023 Wrapped

Each year, we aim to elevate the Spotify Wrapped experience for our users, crafting captivating d...

January 4, 2024

Q&A with the Maintainers of the Spotify FOSS Fund

TL;DR Let’s cap the year by putting a spotlight on some of the valuable work people outside of Sp...

December 5, 2023

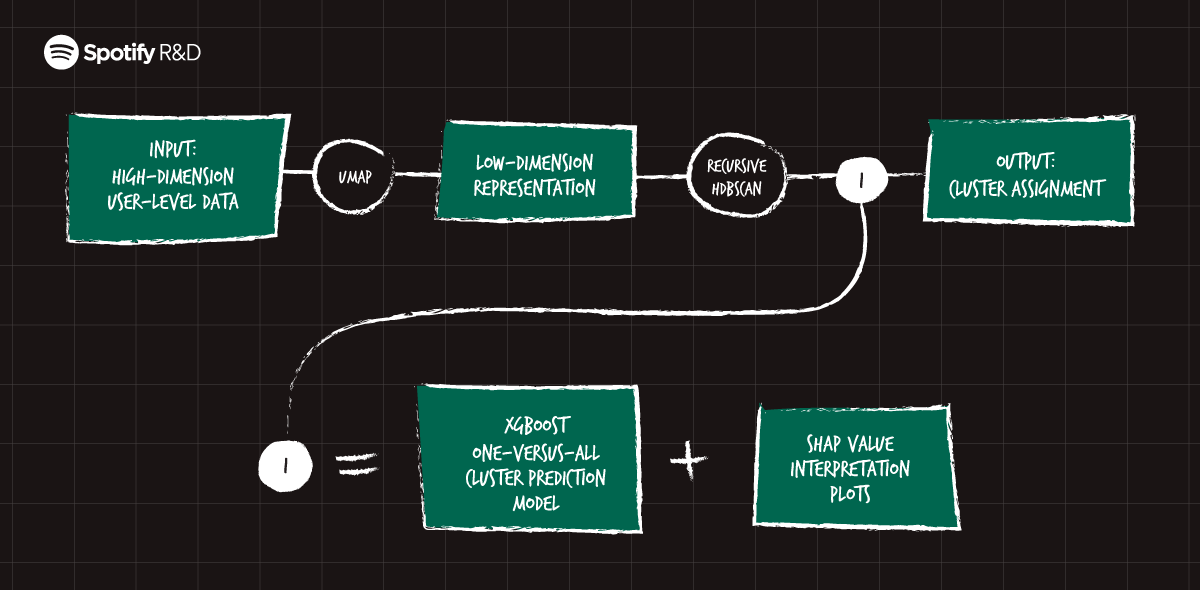

Recursive Embedding and Clustering

TL;DR Large sets of diverse data present several challenges for clustering, but through a novel a...

November 14, 2023

The What, Why, and How of Mastering App Size

Our daily tasks as engineers often involve implementing new functionalities. Existing users get t...

November 8, 2023

Spotify Wins CNCF Top End User Award for the Second Time!

This week at KubeCon + CloudNativeCon in Chicago, the Cloud Native Computing Foundation announced...

November 7, 2023

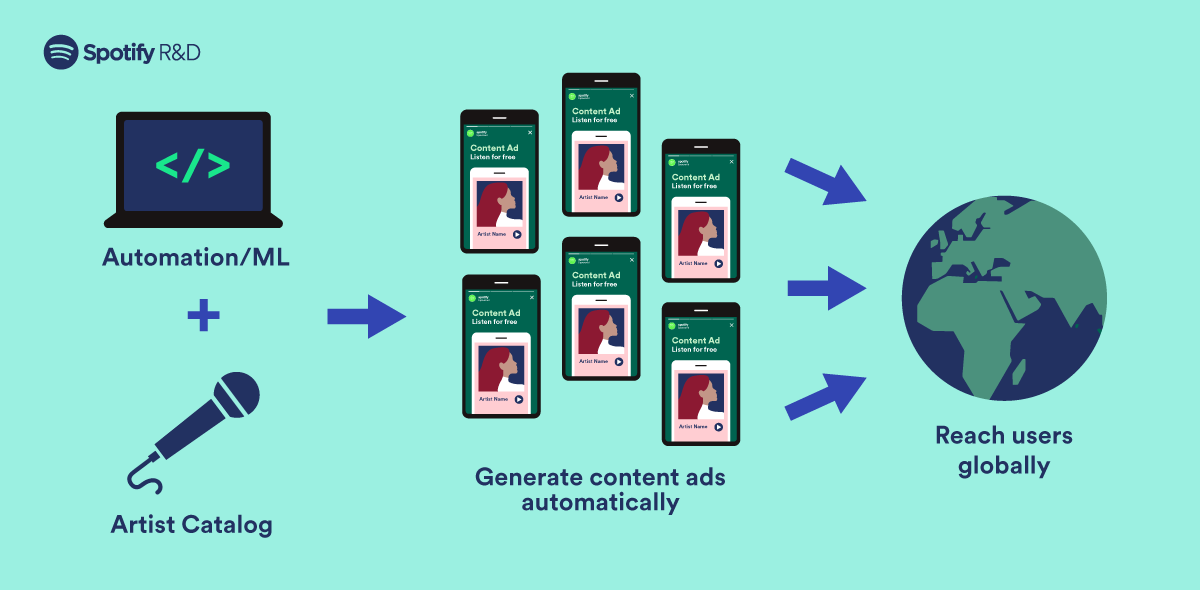

How We Automated Content Marketing to Acquire Users at Scale

Spotify runs paid marketing campaigns across the globe on various digital ad platforms like Faceb...

October 25, 2023

Introducing Voyager: Spotify’s New Nearest-Neighbor Search Library

For the past decade, Spotify has used approximate nearest-neighbor search technology to power our...

October 23, 2023

Announcing the Recipients of the 2023 Spotify FOSS Fund

TL;DR It’s back! Last year, we created the Spotify FOSS Fund to help support the free and open so...

October 20, 2023

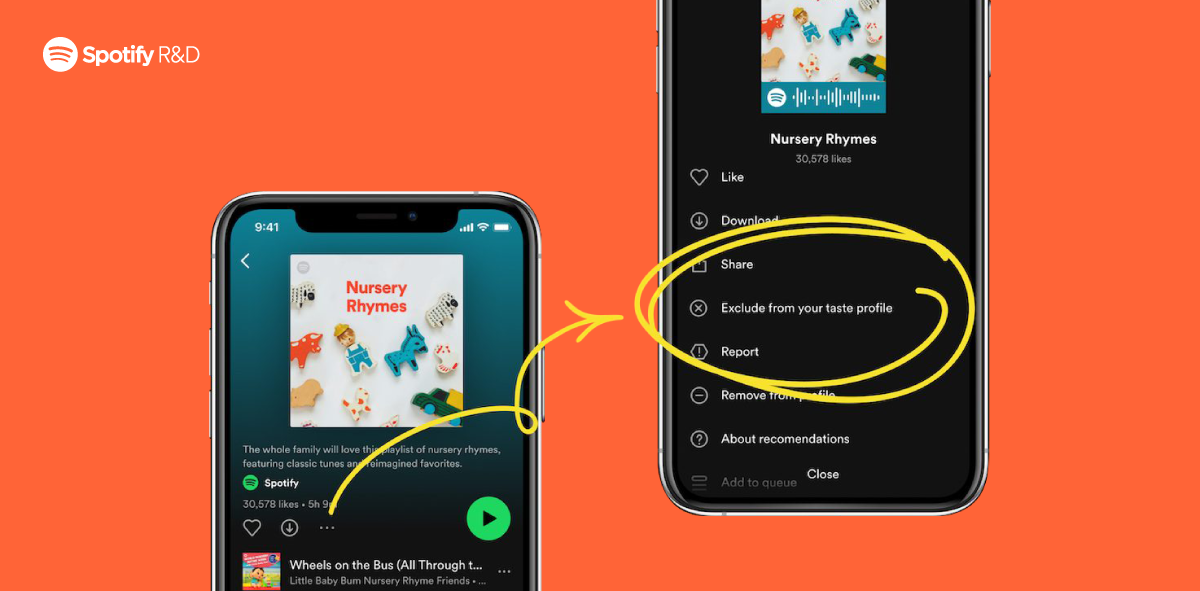

Exclude from Your Taste Profile

What is “Exclude from your taste profile”? Are you a parent forced to put the Bluey theme song...

October 17, 2023

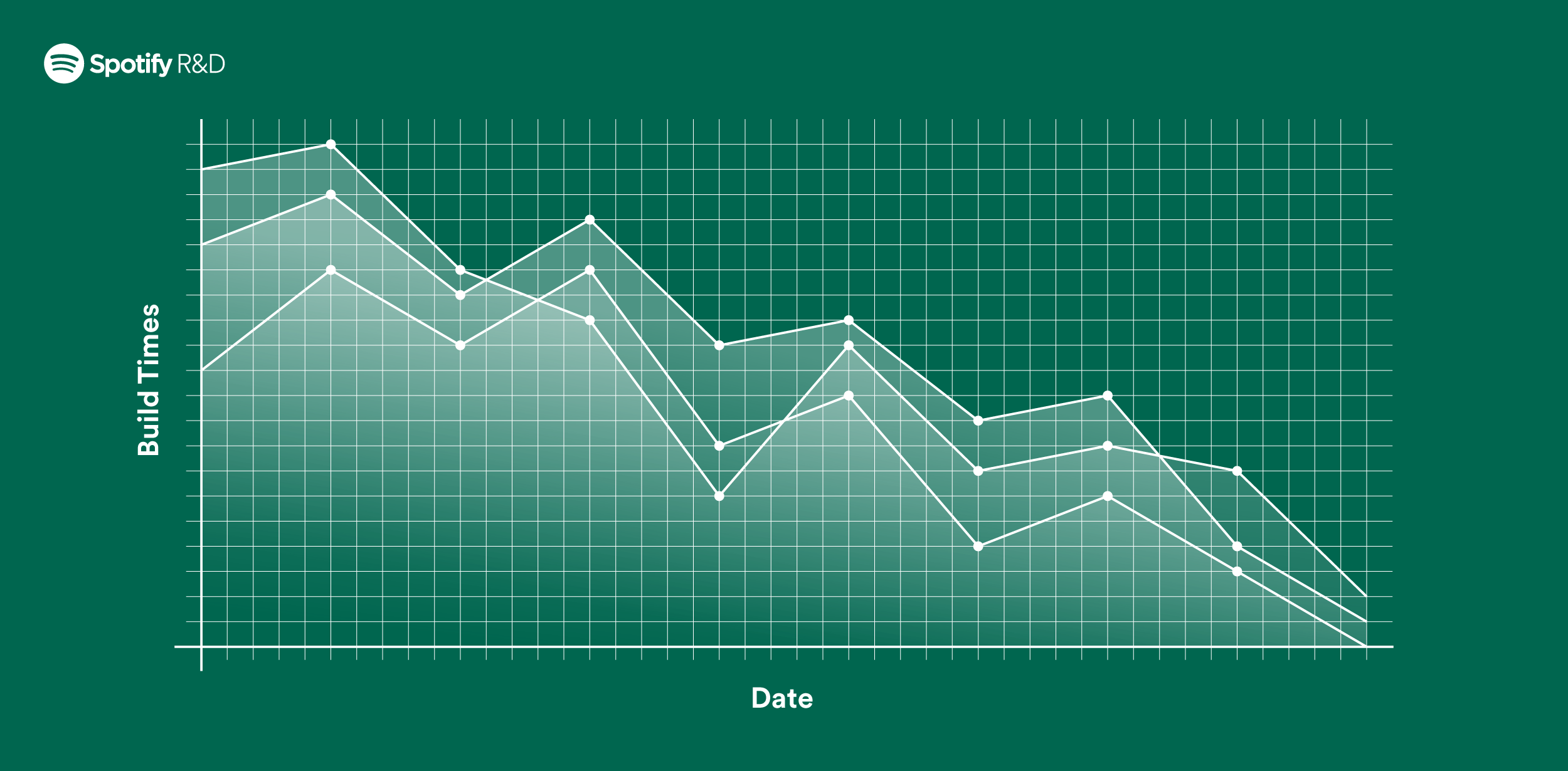

Switching Build Systems, Seamlessly

At Spotify, we have experimented with the Bazel build system since 2017. Over the years, the proj...

October 5, 2023

Managing Software at Scale: Kelsey Hightower Talks with Niklas Gustavsson about Fleet

How does Spotify manage a sprawling tech ecosystem made up of 500+ squads managing over 10,000 so...

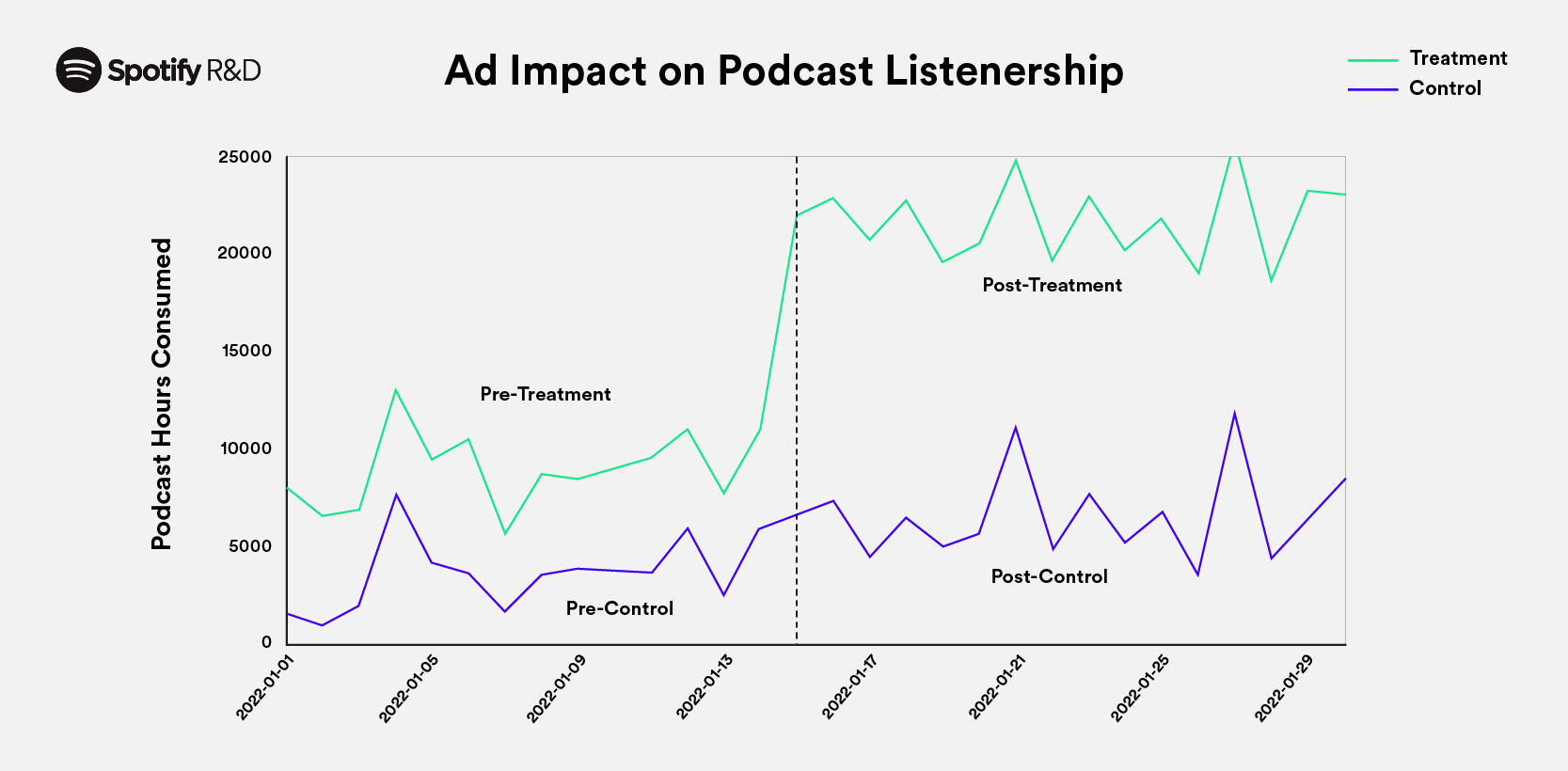

September 28, 2023

How to Accurately Test Significance with Difference in Difference Models

When we want to determine the causal effect of a product or business change at Spotify, A/B testi...

August 24, 2023

Encouragement Designs and Instrumental Variables for A/B Testing

At Spotify, we run a lot of A/B tests. Most of these tests follow a standard design, where we ass...

August 16, 2023

Experimentation at Spotify: Three Lessons for Maximizing Impact in Innovation

As companies mature, it’s easy to believe that the core experience and most user needs have been ...

August 3, 2023

Coming Soon: Confidence — An Experimentation Platform from Spotify

TL;DR: Spotify is releasing a new commercial product for software development teams: a version of...

July 25, 2023

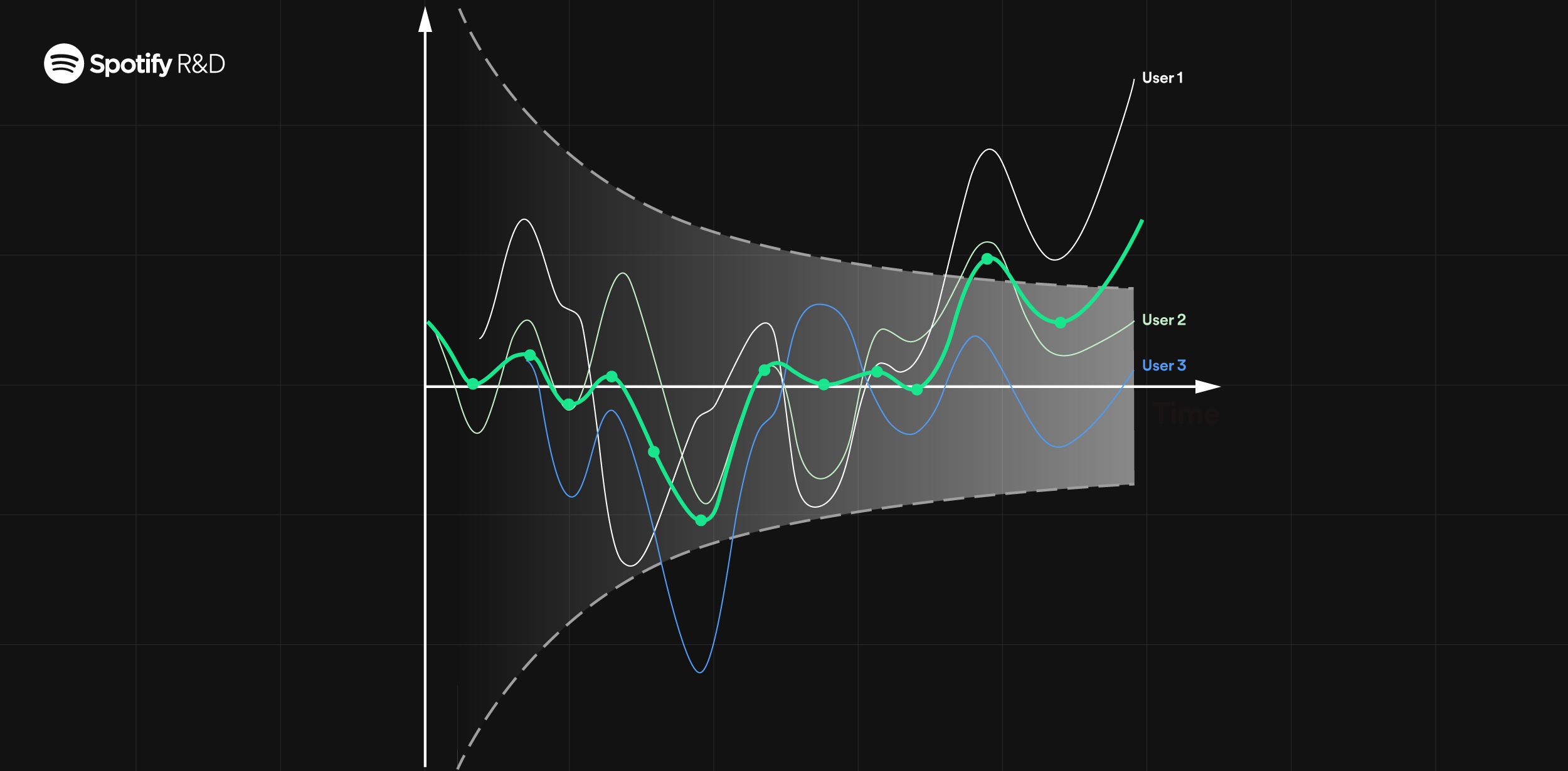

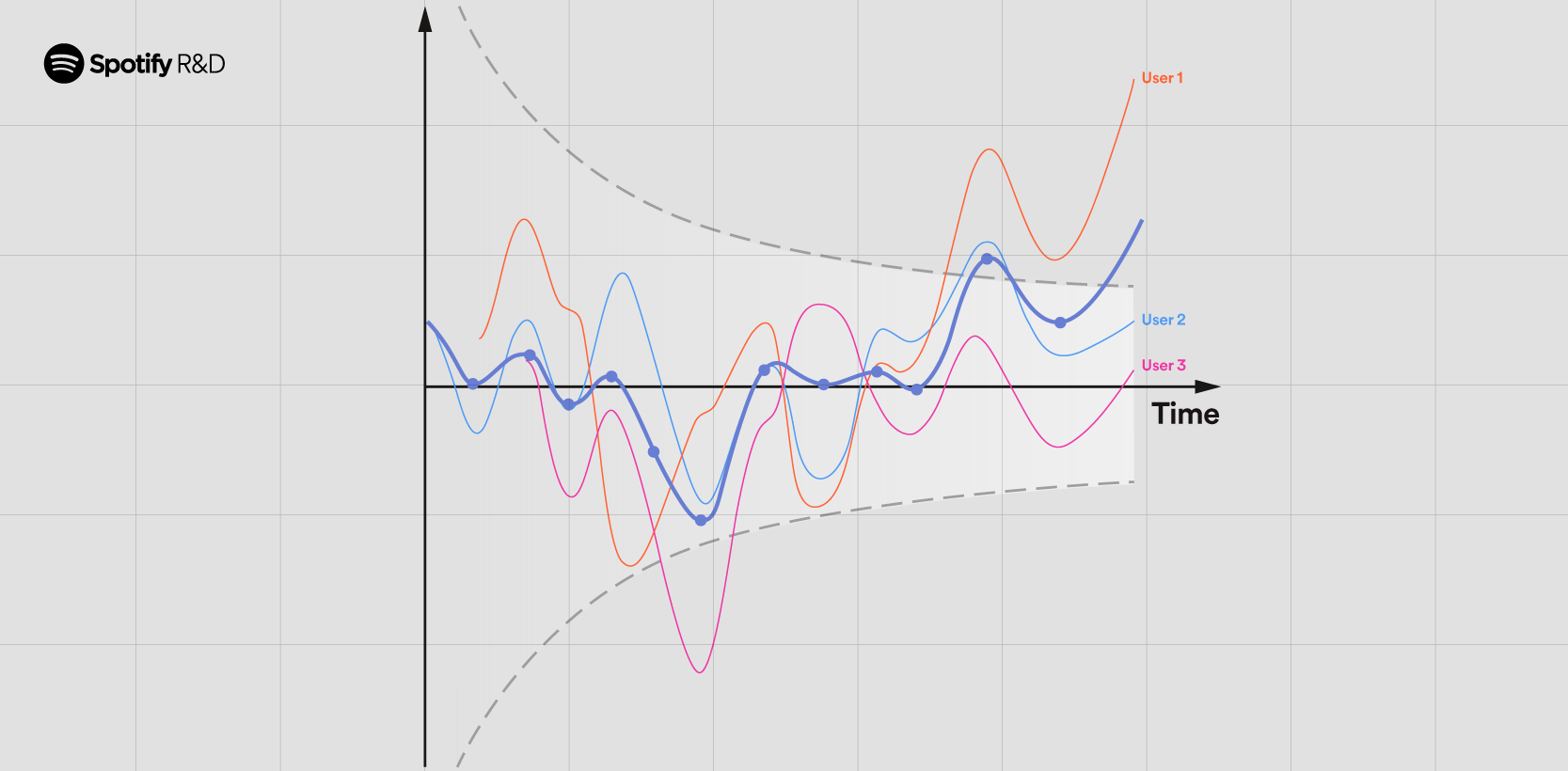

Bringing Sequential Testing to Experiments with Longitudinal Data (Part 2): Sequential Testing

In Part 1 of this series, we introduced the within-unit peeking problem that we call the “peeking...

July 18, 2023

Bringing Sequential Testing to Experiments with Longitudinal Data (Part 1): The Peeking Problem 2.0

Spotify’s approach to challenges in sequential testing with longitudinal data At Spotify...

June 28, 2023

Experimenting with Machine Learning to Target In-App Messaging

Messaging at Spotify At Spotify, we use messaging to communicate with our listeners all over t...

June 22, 2023

Analyzing Volatile Memory on a Google Kubernetes Engine Node

TL;DR At Spotify, we run containerized workloads in production across our entire organization in ...

June 15, 2023

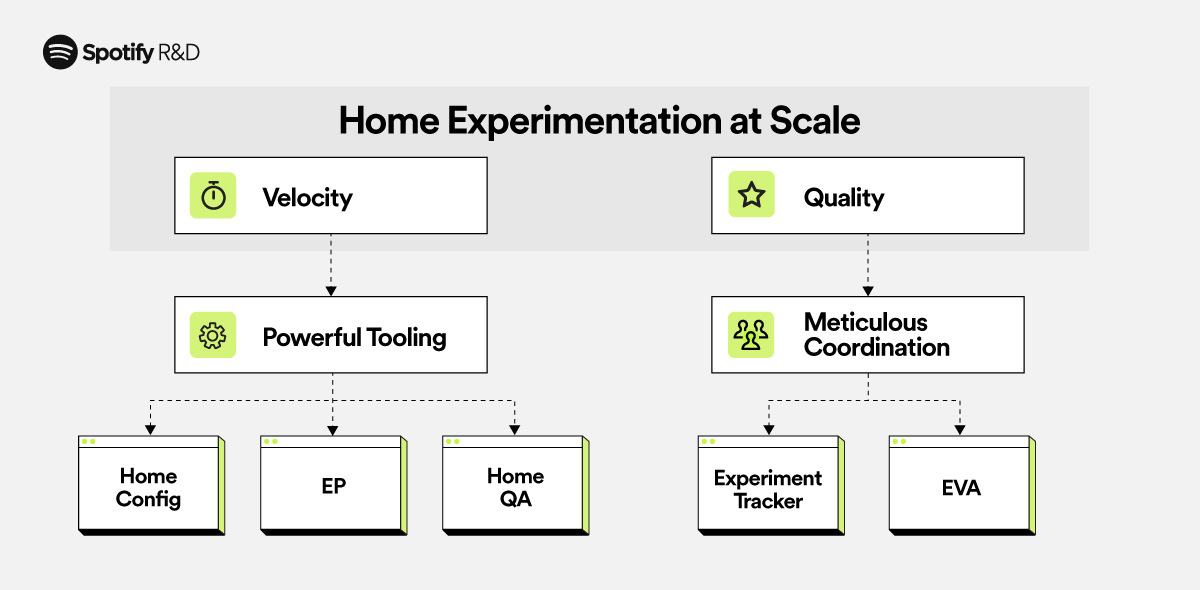

Experimenting at Scale, the Spotify Home Way

In the fast-paced world of streaming, personalization plays a vital role in enhancing user experi...

May 25, 2023

Multiple Layers of Abstraction in Design Systems

Check out our previous post — Customization & Configuration in Design Systems — for more abou...
